Artificial Intelligence (AI) has become an increasingly important part of modern technology, with applications ranging from chatbots to autonomous vehicles. However, as the use of AI grows, it’s crucial to consider the legal, ethical, and data privacy implications of these technologies. The deployment of AI can have far-reaching consequences, so it’s important to ensure that the technology is used responsibly and sustainably. With GPT-3, a large language model developed by OpenAI and one of the largest at the time of its release, these considerations have become even more relevant. In this article, we will discuss the legal implications of GPT-3 and the ethical and data privacy considerations to weigh when using GPT-3 in a product.

GPT-3’s legal, ethical, and data privacy considerations are important because the model’s vast amount of training data and advanced language capabilities make it capable of generating highly convincing and potentially harmful outputs.

There are concerns about the potential for the model to perpetuate biases and misinformation, as well as the privacy of individuals whose data was used in the model’s training. It is important for organizations using GPT-3 to be aware of these considerations and to implement proper safeguards to ensure responsible use of the technology and the protection of individuals’ rights. It is also very important to review your product against the OpenAI Use Case Guidelines early on, so that you do not waste time and effort building something that will not be approved for public release.

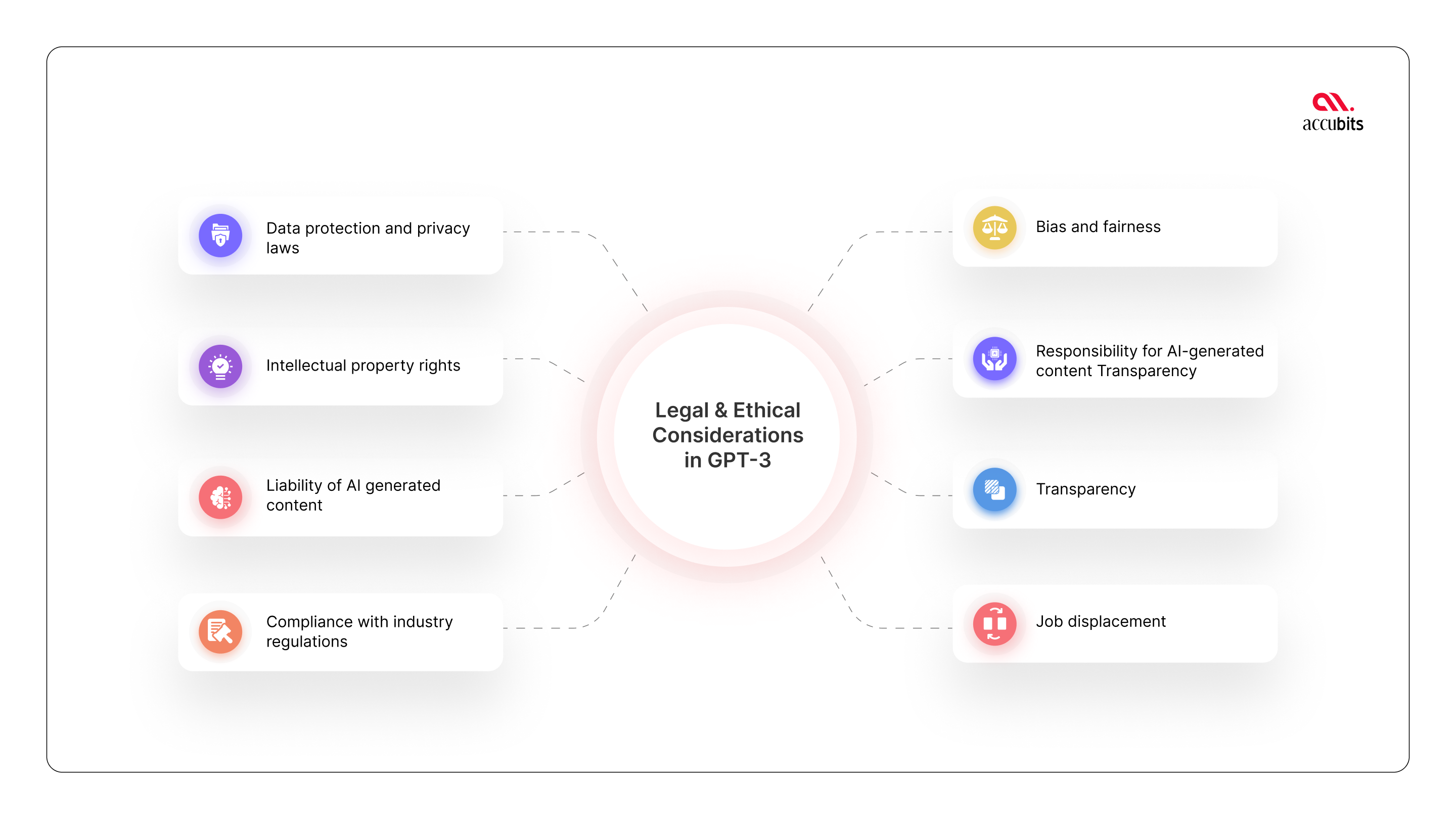

When using GPT-3 to build a product, it’s important to consider the legal implications to ensure compliance with relevant laws and regulations. Some legal implications of GPT-3 to keep in mind include the following:

Organizations must ensure that their use of GPT-3 complies with data protection and privacy laws, such as the General Data Protection Regulation (GDPR) in the EU and the California Consumer Privacy Act (CCPA) in California. This includes obtaining the necessary consent from users for collecting and using their data and ensuring the safe storage of user data. Organizations must also have a clear privacy policy that explains how user data is collected, used, and shared.
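To make the consent requirement concrete, here is a minimal sketch of a consent check that runs before any user text is passed on to a language model. The `ConsentRegistry` class, the `prepare_prompt` function, and the `"ai_processing"` purpose label are hypothetical names chosen for illustration; a real system would persist consent records in durable storage and follow the advice of legal counsel.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentRegistry:
    """Hypothetical in-memory record of which users agreed to which purposes."""

    records: dict = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        # Keep the timestamp so the consent can be audited later.
        self.records[(user_id, purpose)] = datetime.now(timezone.utc)

    def revoke(self, user_id: str, purpose: str) -> None:
        # Revoking consent that was never given is a no-op.
        self.records.pop((user_id, purpose), None)

    def has_consent(self, user_id: str, purpose: str) -> bool:
        return (user_id, purpose) in self.records


def prepare_prompt(registry: ConsentRegistry, user_id: str, user_text: str) -> str:
    """Return the text to send to the model, or raise if consent is missing."""
    if not registry.has_consent(user_id, "ai_processing"):
        raise PermissionError(f"user {user_id} has not consented to AI processing")
    return user_text
```

The point of the design is that the check sits in the only code path that leads to the model, so user data cannot be processed by accident when consent is missing or has been revoked.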

The use of GPT-3 should not infringe on intellectual property rights, such as patents or copyrights. Organizations should thoroughly review how they use GPT-3 and ensure that it does not infringe on any third-party rights. This includes not using GPT-3 for tasks that may infringe on intellectual property rights, such as creating unauthorized copies of copyrighted works.

Organizations using GPT-3 may be held liable for any negative consequences of using AI-generated content, such as defamation or copyright infringement. For example, if GPT-3 generates content that defames an individual, the organization may be liable for the harm caused. Organizations must consider the potential legal risks and implement measures to minimize liability, such as reviewing and filtering AI-generated content before publication.
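The review-and-filter step mentioned above could look roughly like the following sketch, which holds back generated text for human review whenever it matches a risk pattern. The pattern names and keyword lists are placeholder assumptions, not a vetted legal filter, and `review_generated_text` is a hypothetical helper; keyword matching alone will miss many problems, so it should complement human review rather than replace it.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately small patterns used only for illustration.
RISK_PATTERNS = {
    "possible_defamation": re.compile(r"\b(fraudster|criminal|scammer)\b", re.IGNORECASE),
    "possible_copied_text": re.compile(r"©|all rights reserved", re.IGNORECASE),
}


@dataclass
class ReviewDecision:
    publish: bool   # True when no risk pattern matched
    reasons: list   # names of the patterns that matched


def review_generated_text(text: str) -> ReviewDecision:
    """Flag generated text for human review if any risk pattern matches."""
    reasons = [name for name, pattern in RISK_PATTERNS.items() if pattern.search(text)]
    return ReviewDecision(publish=not reasons, reasons=reasons)
```

For example, `review_generated_text("John Doe is a known fraudster.")` returns a decision with `publish` set to `False`, so the text would go to a reviewer instead of being published automatically.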

Organizations using GPT-3 in specific industries, such as finance or healthcare, must ensure compliance with relevant industry regulations. For example, financial organizations may be subject to anti-money-laundering and fraud prevention regulations, and healthcare organizations may be subject to patient privacy and data protection rules. Organizations must be mindful of these regulations and take steps to ensure compliance.

Organizations need to consider the legal implications of GPT-3 and take necessary steps to ensure compliance with relevant laws and regulations. This includes obtaining the necessary consent from users, ensuring the safe storage of user data, avoiding infringement of intellectual property rights, and complying with industry regulations.

In addition to its legal implications, GPT-3 raises important ethical concerns. Some ethical AI challenges to keep in mind include the following:

Machine learning models, including GPT-3, can perpetuate existing biases in the data they are trained on. This can lead to biased AI-generated content that is unfair to certain groups. For example, if GPT-3 is trained on biased data, it may generate content that perpetuates harmful stereotypes or discrimination. To minimize bias in AI-generated content, organizations using GPT-3 should diversify their training data and regularly review AI-generated content for fairness.

Organizations using GPT-3 are responsible for the content generated by the model, even if it is generated automatically. This means that organizations must be aware of the potential implications of AI-generated content and take steps to ensure that it aligns with their values and does not cause harm. For example, organizations should implement measures to review and filter AI-generated content before it is published and take responsibility for any negative consequences of AI-generated content.

AI systems, including GPT-3, can be difficult for users to understand and can be perceived as opaque. This raises questions about the accountability and transparency of AI-generated content and the ability of organizations to explain how AI-generated content is produced. To promote transparency, organizations should explain how AI systems work and be transparent about their data sources and training processes.

The use of GPT-3 and other advanced AI systems has the potential to automate many tasks previously performed by humans, leading to job displacement. This raises ethical questions about the impact of AI on the workforce and the responsibility of organizations to support affected workers. Organizations should consider the potential impact of AI on the workforce and take steps to support workers who may be displaced, such as retraining programs or other forms of support.

Data privacy is a major concern for organizations using advanced artificial intelligence (AI) systems like GPT-3. Because these systems can process and store vast amounts of sensitive user data, organizations must take appropriate measures to protect this information. Here, we will explore the steps organizations can take to ensure the security and privacy of user data when using GPT-3 and other AI systems, from implementing robust security measures to complying with relevant data privacy regulations.
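One such protective measure is to strip obvious personal identifiers from user text before it leaves your systems. The sketch below assumes a hypothetical `redact_pii` helper and two simplified regular expressions for email addresses and phone numbers. Real personal data takes many more forms (names, addresses, account numbers), so treat this as an illustration of the idea rather than a complete solution.

```python
import re

# Simplified patterns for illustration; they will not catch every format.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


print(redact_pii("Contact jane@example.com or +1 415 555 0100."))
# prints: Contact [EMAIL] or [PHONE].
```

Redacting before the text is sent to a model reduces how much sensitive data a third party ever receives, which also shrinks the scope of what has to be covered by consent and retention policies.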

Transparency in AI technology is essential for building trust with users and ensuring they know how their data is used. Organizations can promote accountability and responsibility in developing and deploying AI systems by providing clear information about how AI systems work. Transparency helps organizations demonstrate their commitment to responsible data practices, which is especially important in light of recent data privacy scandals and concerns about the misuse of personal data.

In addition to building trust with users, transparency helps to reduce the risk of bias in AI systems. AI systems are only as good as the data they are trained on; if the data is biased, the results will be too. Transparency helps organizations identify and address potential biases in their data and algorithms, which is crucial for ensuring that AI systems are fair and unbiased.

Transparency also improves decision-making by providing users with the information they need to understand and interpret the outputs of AI systems. This is particularly important for applications such as credit scoring or predictive policing, where AI systems make decisions that can significantly impact individuals and communities. By being transparent about the data and algorithms used to make these decisions, organizations can ensure that they are being used responsibly and ethically.

Finally, many jurisdictions have enacted data protection and privacy regulations that require organizations to be transparent about their data practices. By being open about how they use and process data, organizations can ensure that they comply with these regulations and treat user data with the respect it deserves.

In short, transparency helps promote accountability, build user trust, reduce bias, improve decision-making, and ensure legal compliance, all of which are essential for the responsible development and deployment of AI systems and for protecting user data.

As we dive into GPT-3 and all its wonders, it’s important to remember that great power comes with great responsibility. When incorporating this AI technology into your product, three key areas need attention: legality, ethics, and data privacy. On the legal side, ensuring compliance with data protection regulations and respecting intellectual property rights is crucial. Failing to do so could have serious consequences for your business.

Regarding ethics, it’s important to ensure that your use of GPT-3 aligns with ethical principles and avoids biases that may affect your users, including discriminatory or unjust results. Last but certainly not least, data privacy is of the utmost importance. This means implementing robust security measures to protect user data and obtaining consent before collecting and processing personal information.

In conclusion, as we continue to harness the power of GPT-3, it’s essential to strike a balance between its capabilities and the responsibilities of its use. By being mindful of legal, ethical, and data privacy considerations, we can create products that truly benefit society while respecting our users’ rights.
